The net’s long decline into “five giant websites, each filled with screenshots of the other four” isn’t a mystery. Nor was it by any means a foregone conclusion. Instead, we got here through a series of conscious actions by big businesses and lawmakers that put antitrust law into a 40-year coma. Well, now antitrust is rising from its slumber and we have work for it to do.

As regulators and lawmakers think about making the internet a better place for human beings, their top priority should be restoring power to users. The internet’s promise was that it would remove the barriers that stood in all our way: distance, sure, but also the barriers thrown up by large corporations and oppressive states. But the companies gained a toehold in that environment of lowered barriers, turned right around, and put up fresh barriers of their own. That trapped billions of us on platforms that many of us do not like but feel we can’t leave.

Platforms follow a predictable lifecycle: first, they offer their end-users a good deal. Early Facebook users got a feed consisting solely of updates from the people they cared about, and promises of privacy. Early Google searchers got result screens filled with Google’s best guess at what they were searching for, not ads. Amazon once made it easy to find the product you were looking for, without making you wade through five screens’ worth of “sponsored” results.

The good deal for users is only temporary. Platforms today use a combination of tools, including taking advantage of collective action problems, “Most Favored Nation” clauses, collusive back-room deals to block competitors, computer crime laws, and Digital Rights Management to lock their users in. Once those users are firmly in hand, the platforms degrade what made users choose the platform in the first place, making the deal worse for them in order to attract business customers. So instead of showing you the things you asked for, platforms sell your time and attention to businesses.

For example, Facebook broke its promise not to spy on users, created a massive commercial surveillance system, and sold cheap, reliable targeting to advertisers. Google broke its promise not to pollute its search engine with ads and offered great deals to advertisers. Amazon offered below-cost shipping and returns to platform sellers and later shifted the cost onto those sellers. YouTube offered reliable, lucrative income streams to performers and many responded by building their businesses on the platform.

The good deal for business customers is no more permanent than the good deal for end users; once business customers are likewise dependent on a platform, the platform’s generosity ends and it starts clawing back value for its shareholders. Facebook rigs its ad market to rip off publishers and advertisers, Apple hikes the fees it charges app makers, and Amazon follows suit, until more than half the price of the third-party goods you buy on Amazon is consumed by junk fees; Google fires 12,000 workers after a stock buyback that would have paid all their salaries for the next 27 years, even as its search quality degrades and its results pages are overrun by fraud. A platform where nearly all the value has been withdrawn from users and business customers lives in a state of fragile equilibrium, trapped in a cycle of degrading service, increased regulatory scrutiny after privacy violations, moderation scandals, and other highly public failings, and a widening gyre of scandals and bad press. Its value is only in its monopoly.

Amidst this bumper crop of tech scandals, lawmakers and regulators are seeking ways to protect internet users. That’s a good priority to have: platforms will rise and fall. They should, in fact, if they fail to offer anything of actual value to their users. They don’t need our protection. It’s users we should be thinking of.

Protecting users from platform degradation starts with giving users control: control over their digital lives. Users deserve to be protected from deceptive and abusive platform rules. Users deserve to have alternatives to the platforms they use now. Finally, users deserve the right to use those alternatives, without having to pay a heavy price caused by artificial technical or legal barriers to switching.

Two Big Picture Principles For Protecting Users From Platform Degradation

We're in the midst of a long-overdue move to regulate and legislate over platform abuses. Each platform has its own technical ins-and-outs, and so any policy to protect users of a given platform must employ a finely detailed analysis to make sure it does what it’s supposed to do. Experts like EFF live in these details, but ultimately even those details are determined by big picture concerns.

Below, we set out two of those big-picture principles that we think are both critical to protecting users and, compared to many other options, easier to implement.

How these principles are implemented could vary—they may be embodied directly in legislation if done carefully, enforced in specific settlements with regulators or in litigation, or, better yet, voluntarily adopted by platforms, technologists, or developers, or enforced by investors or other kinds of funders (nonprofit or municipal, for instance). They are intended as a framework for evaluating those fine-grained solutions, not to judge whether they are technically effective, but rather, whether they are effective ways to build a public interest internet.

Principle 1: End-to-End: Connecting Willing Listeners With Willing Speakers

The End-to-End Principle is a bedrock idea underpinning the internet: the idea that the role of a network is to reliably deliver data from willing senders to willing receivers. This idea has taken on many guises over the decades since it was formalized in 1981. A familiar example is the idea of network neutrality, which states that your ISP should send you the data you ask for as quickly and reliably as it can. For example, a neutral ISP makes the same “best-effort” attempt to deliver every video you request, while a non-neutral ISP might only use best-effort to deliver the videos from its own affiliated streaming service and slow down the videos served by its rivals.

You signed up with your ISP to get the content and connections you want, not the ones the ISP’s investors wish you’d asked for.

We think that a version of the end-to-end principle has a role to play in the “service layer” of the internet as well, whether that’s in social media, search, e-commerce, or email. Some examples include:

- Social media: If you subscribe to someone’s feed, you should see the things they post. Performers shouldn’t have to outguess and work around the opaque rules of a content recommendation system to get the fruits of their creative labor in front of the people who asked to see them. Recommendation systems have a place, but there should always be a way for social media users to see the updates posted by the people they care enough about to follow, without having to wade through posts by people the platform wants to promote (or is being paid to promote).

- Search: If a search engine has an exact match for the thing you’re searching for, such as a verified local merchant listing, a single document with the exact title you’re seeking, or a website whose name matches your search term, that result should be at the top of the results screen—not multiple ads for lookalike businesses, or worse, scam sites pretending to be lookalike businesses and paying to go above the best match.

- E-Commerce: If an e-commerce platform has an exact match for the product you’ve searched for—either by name or part/model number—that result should be the top result for your search, above the platform’s own equivalent products, or “sponsored” results for lookalike products.

- Email: If you mark a sender as trusted, their email should never go to a spam folder (however, it’s fine to add warnings about malicious attachments or links to messages flagged by scanners). It should be easy to mark a sender as trusted. Senders in your address book should automatically be trusted.
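To make the social media and search examples concrete, here is a minimal sketch of what an end-to-end feed and an exact-match-first results page could look like. It is an illustration under simplifying assumptions, not a description of how any real platform works: the `Post` and `Result` types, their fields, and the functions `following_feed` and `exact_match_first` are all hypothetical names invented for this example, and "exact match" is reduced to a case-insensitive title comparison.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    author: str
    timestamp: int  # hypothetical: larger number means a newer post
    text: str


@dataclass(frozen=True)
class Result:
    title: str
    sponsored: bool = False


def following_feed(posts, following):
    """Return only posts by accounts the user follows, newest first."""
    chosen = [p for p in posts if p.author in following]
    return sorted(chosen, key=lambda p: p.timestamp, reverse=True)


def exact_match_first(query, results):
    """Put non-sponsored exact title matches on top; keep the rest in platform order."""
    wanted = query.strip().casefold()

    def is_exact(result):
        return not result.sponsored and result.title.strip().casefold() == wanted

    exact = [r for r in results if is_exact(r)]
    rest = [r for r in results if not is_exact(r)]
    return exact + rest


posts = [
    Post("alice", 100, "new song"),
    Post("brandco", 300, "buy now"),
    Post("bob", 200, "tour dates"),
]
feed = following_feed(posts, following={"alice", "bob"})
print([p.author for p in feed])  # ['bob', 'alice']

results = [
    Result("Widget Pro 2", sponsored=True),
    Result("Acme Widget 3000"),
    Result("Acme Widget 3000 case"),
]
ranked = exact_match_first("acme widget 3000", results)
print(ranked[0].title)  # Acme Widget 3000
```

Nothing here rules out recommendations or ads. The sketch only guarantees that the thing the user asked for is available, and that it comes first.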

Principle 2: Right of Exit: Treating Bad Platforms As Damage and Routing Around Them

Plenty of people don’t like big platforms but feel they can’t leave. Social media users are locked in thanks to the “collective action problem” of convincing all their friends to leave and agreeing on where to go. Performers and creators are locked in because their audiences can’t follow them to new platforms without losing the media they’ve paid for (and audiences can’t leave because the creators they enjoy are stuck on the platforms).

Making it easier for platform users to go elsewhere has two important effects. First, it disciplines platform owners who are tempted to shift value from users to themselves, because they know that making their platforms worse (by allowing harassment and scams, increasing surveillance, raising prices, or accepting invasive advertising) will precipitate a mass exodus of users who can leave without paying a high price.

Second, and just as important: if platforms aren’t disciplined by this threat, then users can leave, treating the bad platform as damage and routing around it. That is the part of the free market that these companies always try to forget: consumers are supposed to be able to vote with their feet. But if you will lose contact with your friends and family if you leave a terrible service, you can’t really choose the better option.

What’s more, a world where hopping platforms is easy is a world where tinkerers, co-ops, and startups have a reason to build alternatives that users can hop to.

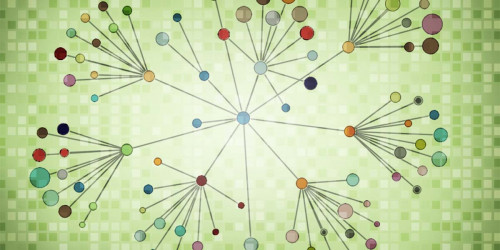

Social media: “Interoperable” social media platforms connect to one another, allowing users to exchange messages and participate in communities from “federated” servers that each have their own management, business model, and policies. Services based on the open ActivityPub standard (like Mastodon) are designed to make switching easy: users need only export their list of followers and the accounts they follow and upload them to another server, and all those social connections are re-established with just a few clicks (Bluesky, a new service that boasts of its federation capabilities but is thus far limited to a single server, has a similar function). This ease of switching makes users less reliant on server operators. If your server operator fosters a hostile community, or simply pulls the plug on their server, you can easily re-establish yourself on any other server without sacrificing your social connections. Existing data-protection laws like the CCPA and GDPR already require online service providers to turn over your data on demand; that data should include the files needed to switch from one server to another. Regulators seeking to improve the moderation practices of large social media platforms should also be securing the right of exit for platform users. Sure—let’s make the big platforms better, but let’s also make it easier to walk away from them.
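The export-and-upload step described above can be sketched in a few lines. This is only an illustration of the idea, assuming a made-up one-column CSV format with an `account` header. It is not the export format or API of Mastodon or any other real server, and the function names `export_follows` and `import_follows` are hypothetical.

```python
import csv
import io


def export_follows(handles):
    """Serialize a follow list to CSV text with a single 'account' column."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["account"])
    for handle in sorted(set(handles)):  # de-duplicate and keep output stable
        writer.writerow([handle])
    return buf.getvalue()


def import_follows(csv_text, already_following=()):
    """Return handles from an export that the new account does not follow yet."""
    reader = csv.DictReader(io.StringIO(csv_text))
    known = set(already_following)
    to_follow = []
    for row in reader:
        handle = (row.get("account") or "").strip()
        if handle and handle not in known:
            known.add(handle)
            to_follow.append(handle)
    return to_follow


# The old server hands over the file; the new server works out what to re-follow.
exported = export_follows(["bob@b.example", "alice@a.example", "bob@b.example"])
print(import_follows(exported, already_following=["alice@a.example"]))
# ['bob@b.example']
```

The point of the sketch is how little is involved: the file a user needs in order to leave is small, plain, and cheap for an operator to produce.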

DRM-locked media: Most of the media sold by online stores is encumbered with “Digital Rights Management” technology that prevents the people who buy it from playing it back using unauthorized tools. This locks audiences to platforms because breaking up with the platform means throwing away the media you’ve purchased. It also locks performers and creators to those platforms, unable to switch to rivals that treat them better and pay them more, because their audiences can’t follow them without forfeiting those earlier purchases. Applied to media, the “right of exit” would require platforms to facilitate communications between buyers and creators of media. A creator who switched to a rival platform could use this facility to provide all purchasers of their works with download codes for a new platform, which would be forwarded by the old platform to the creator’s customers. Likewise, customers who switched to another store could send messages via the platform to creators asking for download codes on the new service.

What Do These Two Principles Give Us?

Reasonable Administrability

For decades, failures in tech regulation have been blamed on technology’s speed and regulation’s slowness—we’re told that regulators just can’t keep up with tech.

But tech regulation doesn’t have to be slow. Some proposed tech regulations—like rules requiring platforms to provide full explanations for content moderation and account suspension decisions, or to prevent bullying and harassment—will always be slow, because they are “fact-intensive.”

A rule requiring action on harassment needs: a definition of harassment, an assessment of whether a given action constitutes harassment, and an assessment of whether the action taken was sufficient under the rule. These are the kinds of things that people of good faith could argue about for years, and that people of bad faith could argue about forever.

Furthermore, even once definitions of these things are agreed on, it will take a long time to see the effects in practice and evaluate if they are working as intended.

By contrast, “end-to-end” and “right of exit” are easy to administer, because you will know instantly if the principles are being followed. If we tell social media platforms that they must deliver posts to your followers, we can tell whether that’s happening by making some test posts and checking whether they’re delivered. Same for a rule requiring search tools and e-commerce sites to prioritize exact matches—just do a search and see if the exact match is at the top of the screen.
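The kind of spot check described above is simple enough to sketch. The example below assumes an auditor has already collected, by whatever means, the IDs of posts that showed up in each follower’s feed and the titles on a results page. The function names `undelivered` and `exact_match_is_top` and the data shapes are hypothetical, chosen only for illustration.

```python
def undelivered(test_post_ids, follower_feeds):
    """Map each follower to the test posts missing from their feed.

    Followers who received every test post are left out of the result,
    so an empty dict means every test post reached every follower.
    """
    missing = {}
    for follower, feed_ids in follower_feeds.items():
        seen = set(feed_ids)
        gaps = [pid for pid in test_post_ids if pid not in seen]
        if gaps:
            missing[follower] = gaps
    return missing


def exact_match_is_top(query, result_titles):
    """True if the first result's title equals the query, ignoring case and outer spaces."""
    if not result_titles:
        return False
    return result_titles[0].strip().casefold() == query.strip().casefold()


feeds = {
    "alice": ["t2", "other", "t1"],
    "bob": ["other", "t2"],
}
print(undelivered(["t1", "t2"], feeds))  # {'bob': ['t1']}
print(exact_match_is_top("Acme Widget 3000", ["Sponsored: Widget Pro", "Acme Widget 3000"]))  # False
```

A check like this gives a yes-or-no answer right away, which is what separates these rules from the fact-intensive ones discussed above.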

Likewise for right-of-exit: if a Mastodon user claims they weren’t given the data needed to transfer to another server, the question can be easily settled by the old server owner handing over that data.

There are many important policy priorities that are fact-intensive and hard to administer. The more administrable our other policies are, the more capacity we’ll have to dive into those urgent, gnarly problems.

Avoiding the Creation of Capital Moats

Rules that are expensive to comply with can be a gift to big companies. If we make copyright filters mandatory for online services, we are effectively saying that no one can create an online service unless they have $100 million lying around to stand up a filter. Or we are saying that startups should have to pay those companies rents to use their filter technology. If we make rules that assume that every online service is a Big Tech company, we make it impossible to shrink Big Tech.

End-to-end and right of exit are cheap policies, cheap enough to apply to small companies, individual hobbyists, and also very large, incumbent firms.

Services like Mastodon are already end-to-end: complying with an end-to-end rule would simply require Mastodon server operators not to rip out the existing end-to-end feature; rather, any recommendation/ranking system for Mastodon would have to exist alongside the current system. For large services like Instagram, Facebook and Twitter, end-to-end would mean restoring the simplest form of feed: a feed just of the accounts you actively chose to follow.

Same for right of exit: this is already supported in modern, federated systems, so no new work would have to be done by people running these server tools; rather, their only burden would be to respond in a timely fashion to user requests for their data. These requests are handled automatically, but might require manual work if the operator decides to kick a user off the service or shut the service down.

For Big Tech incumbents, adding a right of exit means implementing an open standard that already has reference libraries and implementations.

A Public-Interest Internet

We want a web where users are in control. That means a web where we freely choose our online services from a wide menu and stay with them because we like them, not because we can’t afford to leave. We want a web where you get the things you ask for, not the things that corporate shareholders would prefer that you’d asked for. We want a web where willing listeners and willing speakers, willing sellers and willing buyers, willing makers and willing audiences are all able to transact and communicate without worrying about their relationships being held hostage or disrupted to cram “sponsored posts” into their eyeballs.

Platform decay is the result of firms undisciplined by either competition or regulation and thus free to abuse their users and business customers without the fear of defection or punishment. Creating policies to give platform users a fair shake and the ability to leave will require a lot of attention to detail—but all that detail needs to be guided by principles, north stars that help keep us on the path to a better internet.