This post has been updated to provide additional context about patents and patent applications, which are indications of an entity’s interest in a particular product but not proof that the product is currently in development or available for use. You can read more about the role of patents in this series in our post, “The Catalog of Carceral Surveillance: Patents Aren't Products (Yet).”

There are too many people in U.S. prisons. Their guards are overworked, underpaid, and prone to human errors, and they require work breaks and food, paychecks and sick days. Plus, they possess flaws that can lead to outbursts of violence, racism, and sexual harassment. Some have taken correctional staff shortages as an indication we need to rework our criminal justice system. In patent filings, prison technology company Global Tel*Link has an alternative suggestion: Robots.

Federal officials and correctional departments nationwide have cited lack of staff as one of the biggest threats to inmate and employee safety. As articulated in its patent, GTL has imagined a future where coordinating robots, outfitted with biometric sensors and configured to identify “events of interest,” will help to fill the gap.

Notorious for overcharging inmates for phone calls, GTL, like its major competitor Securus, has been dreaming up new offerings ever since federal efforts to rein in prison phone costs began.

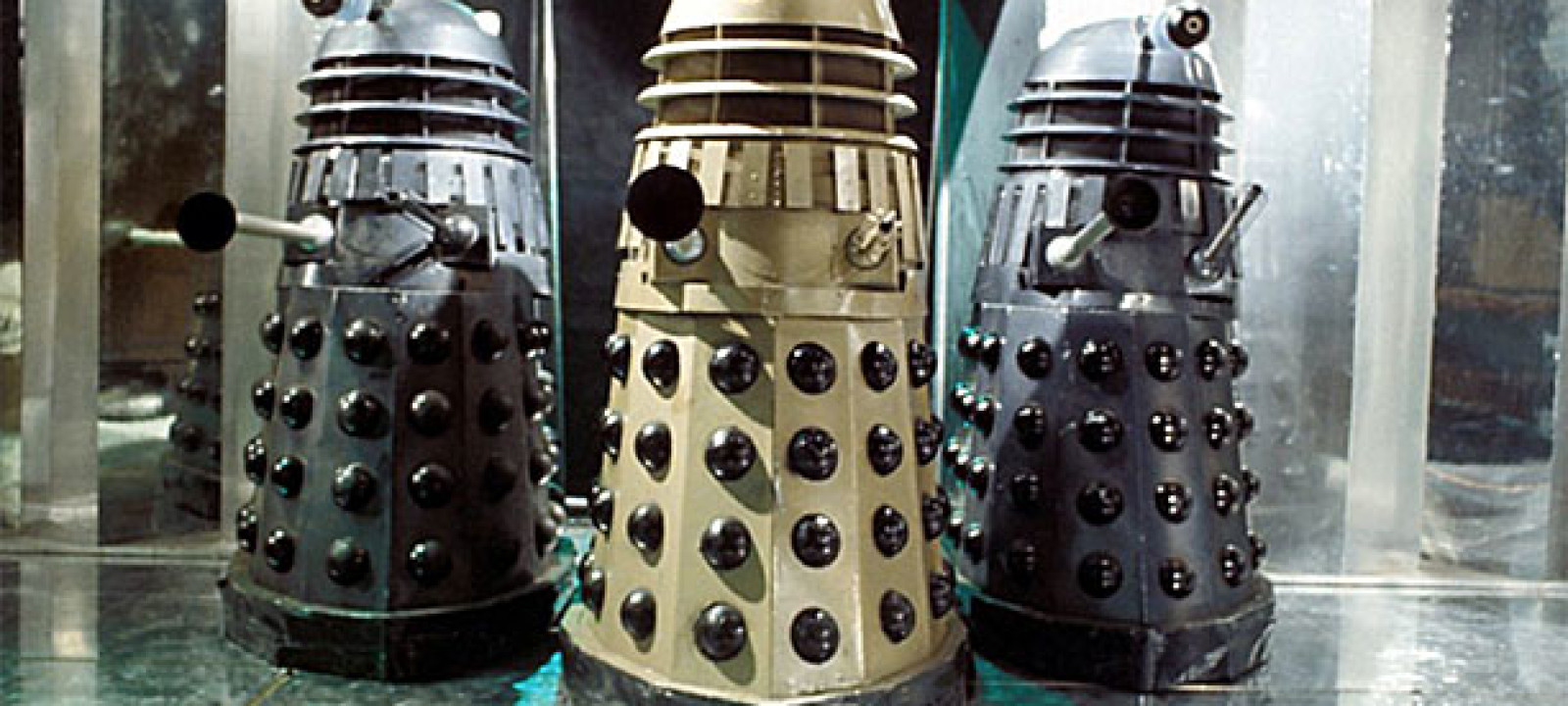

We haven’t seen any Robo-Guards roaming the prison hallways yet, and it’s important to remember that patent filings reflect ideas that may never become tangible products. Given that, we asked GTL whether it was planning to build the robot described in its patent or if it had dropped the idea. GTL declined to comment for this series. Until GTL commits to not building this robot, we’ll remain wary of cost-saving plans that involve outsourcing “enforcement activities” to Dalek-like apparatuses.

It’s a trash can empowered to decide if you get your commissary items or an electroshock. What could go wrong?

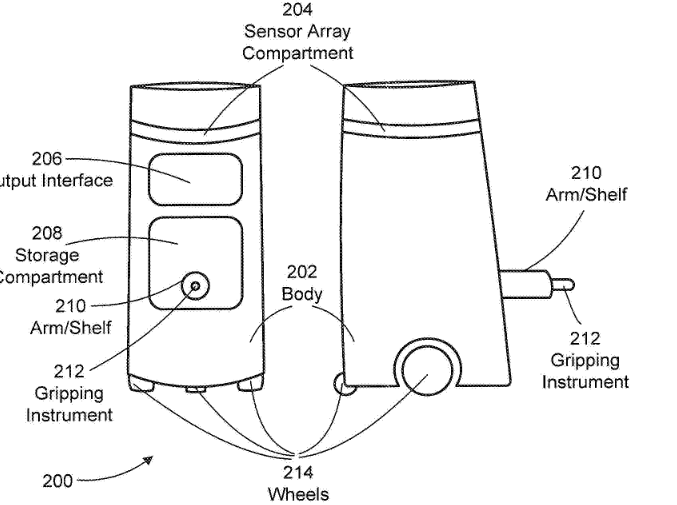

These robots, according to the patent application, could perform many of the same responsibilities as human prison guards with the help of integrated biometric sensors, such as fingerprint and iris scanners, microphones, or even face recognition. The robot could be expected to authenticate packages or individuals before escorting them to other parts of a facility. Or it could be programmed to patrol a particular path, collecting and monitoring audio and video for suspicious words or actions.

Outfitted with tools of supposedly “non-lethal force,” the robot could then be configured to deploy, “autonomously” or “at the remote direction of a human operator,” “an electro-shock weapon, a rubber projectile gun, gas, or physical contact by the robot with an inmate.”

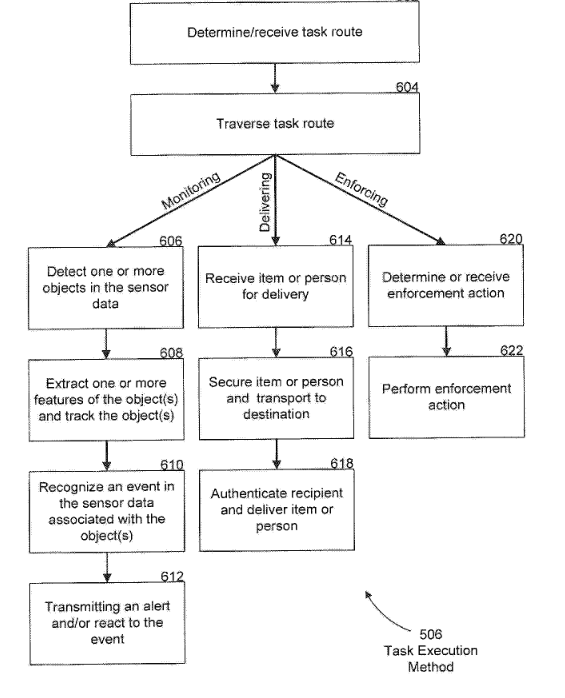

The flowchart that GTL robots would use to make decisions. “Enforcement action” here is a euphemism for potentially lethal disciplinary methods, including rubber bullets and electrical shock.

Why we’re concerned about potentially automated enforcement robots

GTL hasn’t explicitly said that it would be integrating artificial intelligence into its robots, but its patent does imagine that the robots would “have intelligent...communications with inmates and guards.”

According to the patent:

In one embodiment, mobile correctional facility robot can include hardware and software that allow mobile correctional facility robot to have intelligent and realistic communications with inmates and guards in the correctional facility. This capability of mobile correctional facility robot can be used to take orders from inmates or guards (e.g., orders for goods from the correctional facility commissary), to answer general questions of inmates or guards, or to relay electronic messages between inmates and guards or between guards.

If this prison robot becomes reality and relies on artificial intelligence, we would have many, many concerns.

Artificial intelligence is only as good as its training data, and in a lot of cases the training data is not very good. This is, in part, because humans themselves are often not very good at making choices and haven’t yet been able to accurately train AI to identify movements, people, and other objects. AI also tends to reflect cultural biases: if the people who create the training data tend to view people of color as more intimidating, that bias will be infused into the AI as well. All of this is why we don’t want to see a system where a robot might be evaluating a scene for a possibly forceful response.

Depending on the data set such an AI is trained on, it might decide that a hug is a threatening gesture, or a fist bump, or a high five. If someone were to trip and fall, that might be seen as a threatening gesture by the AI. The “Central Controller,” cited multiple times in the GTL patent, can direct multiple robots to work together. GTL has said little about it, and this central controller could be human-powered or could use some form of artificial intelligence to coordinate the robots as they monitor an area for bad words or perform an “enforcement action.” In a version of not-quite-AI, the patent describes giving the robots the power to monitor for “events of interest.” The robots may identify a predetermined spoken word or behavior as a cause for reasonable suspicion, a dangerous way to try to identify a proxy for crime that will also end up capturing a lot of innocuous activity.
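To see why a predetermined word list makes such a blunt proxy, consider a minimal sketch. Nothing here comes from the GTL patent: the word list, the function name `flag_events_of_interest`, and the sample sentences are all hypothetical, chosen only to illustrate how context-free matching might behave.

```python
import re
from typing import List

# Hypothetical watch list. The patent does not publish one; these words
# are assumptions made purely for illustration.
WATCH_WORDS = {"fight", "hit", "kill", "shank"}


def flag_events_of_interest(transcript: str) -> List[str]:
    """Return the watch words that appear in a transcript.

    The match ignores context entirely, which is the weakness
    this sketch is meant to show.
    """
    words = re.findall(r"[a-z']+", transcript.lower())
    return sorted(set(words) & WATCH_WORDS)


if __name__ == "__main__":
    # Hypothetical, entirely innocuous things a person might say.
    samples = [
        "That joke is going to kill at the talent show.",
        "I hit my head on the bunk again.",
        "Don't fight it, just sign the form.",
        "Can I order peanuts from the commissary?",
    ]
    for sentence in samples:
        print(flag_events_of_interest(sentence), "<-", sentence)
```

Under these assumptions, three of the four harmless sentences would be flagged. A real system would presumably be more sophisticated, but any trigger that keys on words or gestures alone faces the same basic problem.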

There are, of course, many activities that, without context, might seem strange, perhaps even threatening:

- sitting in a strange position,

- having a non-violent psychological episode, or

- holding a threatening broom while performing assigned cleaning duties.

I’m afraid I can’t let you buy peanuts from the commissary, Dave.

You will comply.

GTL isn’t the first company to consider making Robo-Patrols a reality. Guard robots created by another company, Knightscope, have been deployed in parks, garages, and other public areas, highlighting other potential problems and risks with these employees: drowning, stairs, blindness, or interference with their LIDAR-based navigation systems.

A Knightscope robot drowns itself after learning it won’t be receiving a paycheck this month.

While there is a well-known issue with incarceration in this country, the solution, we think, is not more AI-enabled robots.

An earlier version of this article incorrectly identified the owner of the patent as Securus, rather than GTL. EFF apologizes for the error.