In the first weeks of the COVID-19 pandemic, a location data broker called Veraset offered officials in Washington, DC full access to its proprietary database of “highly sensitive” device-level GPS data, collected from cell phones, for the entire DC metro area.

The officials accepted the offer, according to public records obtained by EFF. Over the next six months, Veraset provided the District with regular updates about the movement of hundreds of thousands of people (cell phones in hand or tucked away in backpacks or pockets) as they moved about their daily lives. The DC Office of the Chief Technology Officer (OCTO) and The Lab @ DC, a division of the Office of the City Administrator, accepted the data and uploaded it to the District’s “Data Lake,” a unified system for storing and sharing data across DC government organizations. The dataset was only authorized for uses related to COVID research, and there’s no evidence that it has been misused. But it’s unclear to what extent the policies in place bind the use or sharing of the data within the DC government.

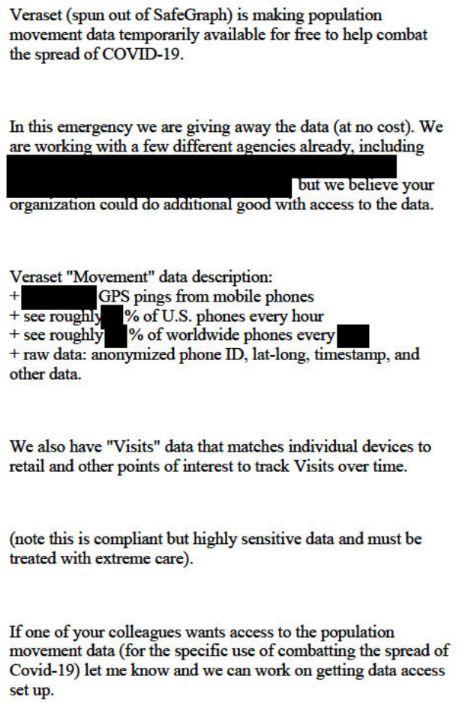

This is far from the only instance of data sharing between private location data brokers and government agencies. Reports at the beginning of the pandemic indicated that governments around the world began working with data brokers, and in the documents we obtained, Veraset said that it was already working with “a few different agencies.” But to our knowledge, these documents are the first to detail how Veraset shared raw, individually identifiable GPS data with a government agency. They highlight the scope and sensitivity of highly invasive location data widely available on the open market. They also demonstrate the risk of “COVID-washing,” in which data brokers might try to earn goodwill by giving away their hazardous product to public health officials during a health crisis.

When asked to comment on the relationship, Sam Quinney, director of The Lab @ DC, gave the following statement:

DC Government received an opportunity from Veraset to analyze anonymous mobility data to determine if the data could inform decisions affecting COVID-19 response, recovery, and reopening. After working with the data, we did not find suitable insights for our use cases and did not renew access to the data when our agreement expired on September 30, 2020. The dataset was acquired for no cost and is scheduled to be deleted on December 31, 2021.

Acquisition

Veraset is a data broker that sells raw location data on the open market. It’s a spinoff of the more-publicized data broker Safegraph, which was recently banned from the Google Play store. (We’ve previously reported on Safegraph’s own relationships with government.) While Safegraph courts publicity by publishing blog posts, hosting podcasts about the data business, and touting its own work on COVID, Veraset has received comparatively little attention. The company’s website pitches its data products to real estate companies, hedge funds, advertising agencies, and governments.

A March 30, 2020 email from Veraset to DC about “Movement” and “Visits” data

Between April and September 2020, Veraset provided regular updates of raw cell-phone location data to the District, which then uploaded it to the “data lake” for further processing in conjunction with the Department of Health. Veraset offered the District access to both its “Movement” and “Visits” datasets for the DC metro area. According to Veraset literature located on data broker clearinghouse Datarade, “Movement” contains “billions” of raw GPS signals. Each signal contains a device identifier, timestamp, latitude, longitude, and altitude.

“Visits” is a more processed version of the data in Movement, which attributes device identifiers to specific, named locations. According to Veraset:

Our proprietary machine learning model merges raw GPS signal with precise polygon places to understand which anonymous device visited which POI at which time. The result is reliable, accurate, device-level visits, refreshed, and delivered daily. In other words, “X anonymous device id visited Y Starbucks at Z date and time.”
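To make the difference between the two products concrete, here is a minimal sketch of how a raw signal with the fields listed above could be matched to a named place. It is an illustration only: Veraset describes its own method as a proprietary machine learning model, which this is not. The `Signal` class, the `attribute_visits` function, the place name, and all coordinates are hypothetical, and the matching uses a plain ray-casting point-in-polygon test.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Signal:
    """One raw GPS record with the fields the article lists (names are assumed)."""
    device_id: str      # advertising identifier
    timestamp: datetime
    lat: float
    lon: float
    altitude: float


def point_in_polygon(lat, lon, polygon):
    """Ray-casting test. `polygon` is a list of (lat, lon) vertices."""
    inside = False
    n = len(polygon)
    for i in range(n):
        lat1, lon1 = polygon[i]
        lat2, lon2 = polygon[(i + 1) % n]
        # Only edges that straddle the point's latitude can be crossed.
        if (lat1 > lat) != (lat2 > lat):
            # Longitude at which this edge crosses the point's latitude.
            cross_lon = lon1 + (lat - lat1) * (lon2 - lon1) / (lat2 - lat1)
            if lon < cross_lon:
                inside = not inside
    return inside


def attribute_visits(signals, places):
    """Return (device_id, place_name, timestamp) for each signal inside a place."""
    visits = []
    for s in signals:
        for name, polygon in places.items():
            if point_in_polygon(s.lat, s.lon, polygon):
                visits.append((s.device_id, name, s.timestamp))
                break
    return visits


# Synthetic example: one made-up rectangular place and two made-up signals.
places = {
    "Example Cafe": [
        (38.9000, -77.0400), (38.9000, -77.0390),
        (38.9010, -77.0390), (38.9010, -77.0400),
    ],
}
when = datetime(2020, 4, 1, 12, 0, tzinfo=timezone.utc)
signals = [
    Signal("ad-id-0001", when, 38.9005, -77.0395, 20.0),  # inside the rectangle
    Signal("ad-id-0002", when, 38.9050, -77.0395, 20.0),  # outside
]
visits = attribute_visits(signals, places)
```

Even this toy version shows why “Visits” is more revealing than it sounds: once a device ID is tied to named places, each row reads as a statement about where a specific phone was and when.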

Veraset says its datasets contain only “anonymous device IDs” and do not contain personally-identifying information. But the so-called “anonymous” ID in question is the advertising identifier, the persistent string of letters and numbers that both iOS and Android devices expose to app developers. These ad IDs are not really anonymous: an entire industry of “identity resolution” services links ad IDs to real identities at scale. Moreover, the nature of location data makes it easy to de-anonymize, because a comprehensive location trace reveals where a person lives, works, and spends time. Often, all it takes to link a location trace to a real person is an address book. Although Veraset does not sell real names or phone numbers, its data is far from anonymous.
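The point that a location trace reveals where a person lives can be shown with a few lines of code. The sketch below is a simplified illustration on synthetic data, not a description of any broker's or agency's actual practice. The function name `likely_home_cell`, the night-time window, and the rounding precision are all assumptions chosen for the example, and timestamps are assumed to be in local time.

```python
from collections import Counter
from datetime import datetime


def likely_home_cell(trace, night_start=22, night_end=6, precision=3):
    """Guess a device's home as the grid cell it occupies most often at night.

    `trace` is a list of (timestamp, lat, lon) tuples for a single device.
    Rounding to 3 decimal places groups points into cells roughly 100 m wide.
    """
    cells = Counter()
    for ts, lat, lon in trace:
        # Keep only pings between night_start and night_end (wraps midnight).
        if ts.hour >= night_start or ts.hour < night_end:
            cells[(round(lat, precision), round(lon, precision))] += 1
    if not cells:
        return None
    return cells.most_common(1)[0][0]


# Synthetic trace for one made-up device.
trace = [
    (datetime(2020, 4, 1, 23, 15), 38.91012, -77.03021),  # night
    (datetime(2020, 4, 2, 2, 40), 38.90988, -77.02979),   # night, same cell
    (datetime(2020, 4, 2, 13, 5), 38.89770, -77.03650),   # daytime, ignored
    (datetime(2020, 4, 2, 23, 50), 38.91004, -77.03011),  # night, same cell
    (datetime(2020, 4, 3, 1, 10), 38.92500, -77.05000),   # night, elsewhere
]
home = likely_home_cell(trace)
```

With a likely home cell in hand, matching the device to a name requires only a list of who lives at which address, which is why an ad ID attached to a detailed trace offers so little anonymity.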

Information about exactly how many people are swept up in the dataset was redacted from the records we received. In our previous reporting on Illinois, Safegraph had sold access to data about 5 million monthly active users, over 40% of the state’s population. Elsewhere, Veraset advertises that its dataset contains “10+% of the US population” sourced from “thousands of [phone] apps and SDKs [software development kits].” Neither Veraset nor Safegraph discloses which specific mobile apps it collects data from, making it nearly impossible for most users to know whether their data ends up on the companies’ servers.

Sharing and Retention

Washington, DC’s use of the data is governed in part by a “Data Access Agreement,” written by Veraset and signed by the District. This agreement forbids the District from using the data for anything other than specified research without Veraset’s consent. Veraset provided the data for the District to download in bulk via Amazon Web Services. Updates were delivered approximately once a day, and contained data at a 24- to 72-hour delay from real time. In other words, data about where someone went on Monday could be shared with DC on Tuesday. District officials regularly transferred new data to DC’s “data lake,” where it remains today, more than a year after the relationship with Veraset ended. According to the District, the data is scheduled to be deleted at the end of 2021.

OCTO publishes a privacy policy applying to the data lake, which divides data into five distinct levels of sensitivity, numbered 0 through 4. The data acquired from Veraset, which the company described as “highly sensitive,” are categorized as “Level 3 - Confidential.” This means the GPS data are encrypted at rest and in transit and that they’re inaccessible via FOIA. However, the policy is vague about how and with whom the data can be shared, stating only that other District agencies need to be “specifically authorized” to access it. In response to questions, DC officials said that the data “was never shared in its raw state with anyone other than the authorized Lab staff and OCTO Data Lake staff.” But it remains unclear whether District law enforcement agencies are allowed to access the data, and what legal approval, if any, they’d need to do so.

Excerpt from OCTO’s “District of Columbia Data Policy”

District documents state that a “universal data sharing agreement” and “privacy impact assessment” for the data lake are in progress, but have not been completed yet.

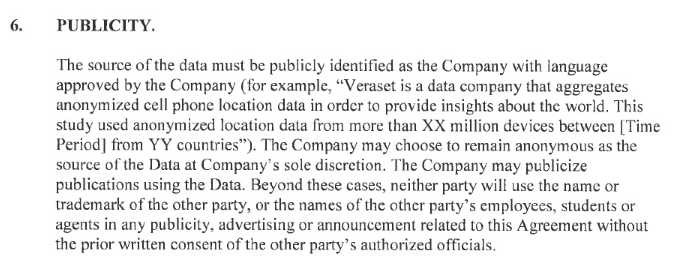

Veraset-Controlled Public Relations

Another feature of the Data Access Agreement is laid out in section 6, “Publicity.” This paragraph gives Veraset control over how the District may, or must, disclose Veraset’s involvement in any publications derived from the data. With this clause, Veraset asserted the right to approve any language that the District might use to disclose the broker’s involvement with its research. Furthermore, it reserved the right to remain “anonymous” as the source of the District’s data if it chose.

Agreement by DC to allow Veraset to control its public statements about the data

This agreement gives Veraset some power to control how DC can, or can’t, discuss its data. This appears to be part of a pattern. Though it generally gets little publicity, Veraset has received favorable mentions from trusted institutions, like university researchers and departments of health. For example, the company’s data has been credited in Veraset-friendly language by dozens of academic publications over the past two years. The Data Access Agreement between Veraset and DC may shed light on how Veraset secures such endorsements.

Was It Worth It?

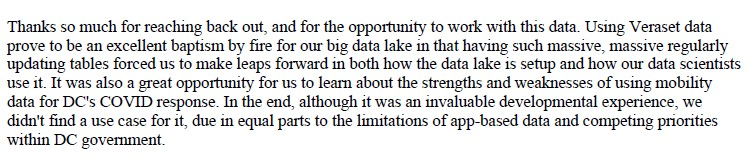

Although DC officials were happy to accept the “free trial” of Veraset data, they declined to sign a new contract with the provider after six months. According to the records we obtained, officials told Veraset that they “didn’t find a use case” for the data, due in part to “the limitations of app-based data.” The District continued to rely on a more privacy-protective, though still controversial, dataset from Google spin-off Replica. But Sam Quinney, director of The Lab @ DC, was effusive in thanking Veraset for providing “such massive, massive regularly updating” datasets.

Email from DC stating it “didn’t find a use case” for Veraset’s “massive, massive” datasets.

Early in the pandemic, we wrote that governments shouldn’t turn to location surveillance to fight the virus, because they have not shown it would actually advance public health and thus justify the harms to civil rights and civil liberties. App-based location data in particular suffers from inaccuracy and bias that make it ill-suited to many public health uses. After more than 20 months of experimenting with intrusive location data to combat COVID, like the “massive, massive” datasets provided by Veraset to DC, governments have still not shown that this extraordinary invasion of privacy is justified by real impacts on the spread of disease.

Lessons Learned

Washington, DC is far from the only government that accepted this kind of deal from a data broker, and there’s no evidence that officials used the data for anything other than COVID research. But that doesn’t make it okay. Veraset’s data is harvested from users without meaningful consent, and is monetized by giving corporations and businesses detailed information about the day-to-day movements of millions of people. At a minimum, DC should have performed more vetting of Veraset’s sources, implemented stronger privacy protections, and justified the acquisition of such sensitive data.

Governments must think twice before acquiring sensitive personal data from private companies that violate our privacy for profit. Even when money doesn’t change hands, deals between governments and data brokers erode our privacy rights and can provide cover for a shadowy, exploitative industry. Furthermore, government agencies that acquire sensitive personal data for one purpose, like public health, must have clear and specific policies that prevent excessive retention, sharing, or use. It is especially important that police and immigration enforcement officials not have access to public health data. These policies should be completed, and made public, before the agencies begin uploading sensitive data to their servers.

More importantly, this kind of data should not be for sale in the first place. Location brokers generally harvest phone app location data from users without meaningful consent, and Veraset does not disclose with specificity where it gets its data, making it nearly impossible for users to know whether they are captured in the company’s dragnet. Users derive no benefit from brokers’ exploitation of their private lives.

Thanks to the California Consumer Privacy Act, people in California can opt out of Veraset’s sale of their data using this form. But it shouldn’t be this difficult: users who don’t know Veraset exists should still be protected from its spying.

We need laws that rein in the rampant collection and sale of intimate data. That means effective consumer privacy legislation that requires companies to get real consent from users before collecting their data at all, and prevents them from harvesting data for one purpose but then selling or monetizing it in other ways. Finally, we need to prevent governments from acquiring data that should be protected by the Fourth Amendment on the open market.