Copyright Bots Aren’t Always Bad, But They Shouldn’t Be in Charge

In 2007, Google built Content ID, a technology that lets rightsholders submit large databases of video and audio fingerprints and have YouTube continually scan new uploads for potential matches to those fingerprints. Since then, a handful of other user-generated content platforms have implemented copyright bots of their own that scan uploads for potential matches.

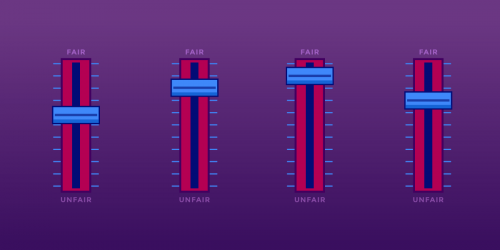

Platforms have no obligation to seek out and block infringing content, and such an obligation would entrench existing providers by stifling new platforms before they could achieve popularity. But if a large platform decides it’s in its interests to evaluate every item of user material for potential infringement, the process probably must be automated—at least in part. The problem comes when humans fall out of the picture. Machines are good at many things—making the final determination on your rights isn’t one of them.

Fair Use Is Free Speech

U.S. copyright law recognizes a key limitation on the powers given to copyright owners: fair use. Fair use protects a wide range of completely valid uses of copyrighted works, uses that are not considered copyright infringement.

Part of why we take fair use so seriously here at EFF is that it’s an essential part of protecting free speech. A law that says you can’t use a certain work without the author’s permission under any circumstances curbs your right to speak about that work or its author or adapt it as part of expressing your own message. When you hear powerful content owners put down fair use—treating it as an obscure loophole in copyright—they’re really trivializing your First Amendment rights.

The Digital Millennium Copyright Act provides for a notice-and-takedown process whereby content owners can disable access to allegedly infringing content. When a takedown happens, the user has the opportunity to contest it with a counter-notice, and when she does, the rightsholder has a limited time to take action against her in federal court. If the rightsholder doesn’t do anything, the allegedly infringing content is restored. Under the DMCA, platforms that comply with notice-and-takedown are protected from liability for their users’ copyright infringement.

“I Have Never Had a Day Where I Felt Safe Posting One of My Videos”

In September, a federal appeals court confirmed that copyright holders must take fair use into account before sending a takedown notice. That hasn’t stopped many large content owners, though.

Last week, the popular YouTube host Doug Walker released a scathing critique of the way in which YouTube handles DMCA takedown requests. In Walker’s YouTube series “Nostalgia Critic,” Walker discusses old films and TV shows. The clips he uses are very short, and presented for the purpose of criticizing or satirizing them. Most of the time, his fair use case is obvious.

And yet, Walker says, fear of takedowns—and of having to navigate YouTube’s labyrinthine three-strikes system—makes him censor himself every day.

“It’s clear that this current system is made to be abused and will continue to be abused until A) it’s fixed; or B) the affected channel gets big enough to give the claimants enough bad press to scare them into not filing the claim. And that shouldn’t be how things are done. I’ve been doing this professionally for over eight years, and I have never had a day where I felt safe posting one of my videos even though the law states I should be safe posting one of my videos.”

Most frustrating of all to Walker and the other critics featured in his video is that, much of the time, they’re the only humans who ever see the takedown. When there’s no easy, informal way for the recipient of a takedown notice to discuss the takedown with the rightsholder (a dolphin hotline, as we like to call it), confusion and frustration abound.

Don’t Outsource Fair Use to Robots

But some people want to take takedowns in the opposite direction, to require even less human involvement. In recent months, certain lobbyists have begun advocating for a filter-everything system: under filter-everything, once a takedown notice goes uncontested, the platform would have to filter and block any future uploads of the same allegedly infringing content.

There are a number of problems with filter-everything. It would put a huge burden on content platforms, especially ones that don’t have the resources of YouTube. It could also compromise users’ privacy—see Facebook’s misguided efforts to filter its users’ private video uploads. But worst of all, it would create a situation where most takedowns happen instantly, with no human involvement at all.

Implemented correctly, copyright bots could weed out cases of obvious infringement and obvious non-infringement, letting participants focus their attention on the cases that demand a serious fair use analysis (or, perhaps, choose not to attempt to censor speech that has a good chance of being non-infringing).
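To make that division of labor concrete, here is a minimal sketch of a bot that only sorts matches and never issues a final ruling. Everything in it is hypothetical: the `Match` fields, the `triage` function, and the `low` and `high` thresholds are illustrative assumptions, not a description of Content ID or any real platform's system.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_CLEAR = "auto_clear"      # obvious non-infringement: leave the upload alone
    HUMAN_REVIEW = "human_review"  # anything ambiguous: a person makes the call
    FLAG_WITH_NOTICE = "flag"      # obvious match: flag it, with a path to human appeal


@dataclass
class Match:
    upload_id: str
    matched_fraction: float  # assumed: share of the upload matching a reference work (0.0 to 1.0)
    has_commentary: bool     # assumed: uploader marked the video as criticism, parody, or review


def triage(match: Match, low: float = 0.05, high: float = 0.95) -> Route:
    """Sort a fingerprint match into a queue; thresholds are illustrative only."""
    if match.matched_fraction <= low:
        return Route.AUTO_CLEAR
    if match.matched_fraction >= high and not match.has_commentary:
        return Route.FLAG_WITH_NOTICE
    # Partial matches and anything marked as commentary may be fair use,
    # so the bot hands them to a human instead of deciding.
    return Route.HUMAN_REVIEW
```

Under these assumptions, a short clip inside a review video would land in `HUMAN_REVIEW` no matter how closely the clip itself matches a reference work. The point of the design is that the bot narrows the pile; it does not decide anyone's rights.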

Unfortunately, as the use of copyright bots has become more popular, artists have had to teach themselves tricks to work around the bots’ logic rather than fully enjoy the freedoms fair use was designed to protect.

The beauty of fair use is in its flexibility. Fair use has been interpreted to permit many kinds of innovative, transformative uses of copyrighted works—uses that didn’t exist when the law was written. Fair use doesn’t build a fence around innovation; it lights the way to new possibilities. No machine can do that.

This week is Fair Use Week, an annual celebration of the important doctrines of fair use and fair dealing. It is designed to highlight and promote the opportunities presented by fair use and fair dealing, celebrate successful stories, and explain these doctrines.