The Proposal Is Unfair to Both Users and Media Platforms

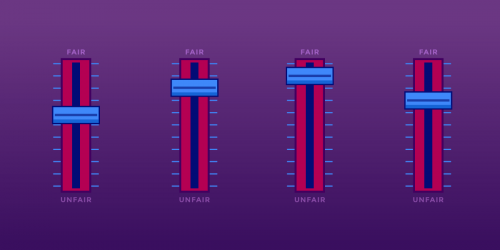

There’s a debate happening right now over copyright bots, programs that social media websites use to scan users’ uploads for potential copyright infringement. A few powerful lobbyists want copyright law to require platforms that host third-party content to employ copyright bots, and require them to be stricter about what they take down. Big content companies call this nebulous proposal “notice-and-stay-down,” but it would really keep all users down, not just alleged infringers. In the process, it could give major content platforms like YouTube and Facebook an unfair advantage over competitors and startups (as if they needed any more advantages). “Notice-and-stay-down” is really “filter-everything.”

At the heart of the debate sit the “safe harbor” provisions of U.S. copyright law (17 U.S.C. § 512), which were enacted in 1998 as part of the Digital Millennium Copyright Act. Those provisions protect Internet services from monetary liability based on the allegedly infringing activities of their users or other third parties.

Section 512 lays out various requirements for service providers to be eligible for safe harbor status, most significantly that they comply with a notice-and-takedown procedure: if you have a good-faith belief that I’ve infringed your copyright, you (or someone acting on your behalf) can contact the platform and ask to have my content removed. The platform removes my content and notifies me of the removal. I have the opportunity to file a counter-notice, indicating that you were incorrect in your assessment: for example, that I didn’t actually use your content, or that I used it in a way that didn’t infringe your copyright. If you don’t take action against me in a federal court, the platform restores my content 10 to 14 business days after receiving my counter-notice.
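For readers who think in code, the notice-and-takedown procedure described above can be read as a small state machine. The sketch below is only an illustration of that flow, not a statement of what the statute requires in every case; the names `Status`, `HostedItem`, and the method names are hypothetical and were invented for this example.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Status(Enum):
    LIVE = auto()             # content is publicly available
    REMOVED = auto()          # taken down after a notice
    COUNTER_NOTICED = auto()  # uploader has disputed the takedown
    RESTORED = auto()         # put back after the waiting period


@dataclass
class HostedItem:
    """Hypothetical record for one user upload on a platform."""
    uploader: str
    status: Status = Status.LIVE

    def takedown_notice(self) -> None:
        # The platform removes the content on receipt of a notice;
        # no judge has looked at the claim at this point.
        if self.status in (Status.LIVE, Status.RESTORED):
            self.status = Status.REMOVED

    def counter_notice(self) -> None:
        # The uploader says the notice was mistaken (for example, fair use).
        if self.status is Status.REMOVED:
            self.status = Status.COUNTER_NOTICED

    def waiting_period_elapsed(self, lawsuit_filed: bool) -> None:
        # If the claimant has not gone to federal court, the content returns.
        if self.status is Status.COUNTER_NOTICED:
            self.status = Status.REMOVED if lawsuit_filed else Status.RESTORED


item = HostedItem(uploader="me")
item.takedown_notice()
item.counter_notice()
item.waiting_period_elapsed(lawsuit_filed=False)
```

Notice that every transition here is triggered by a person: a claimant sends a notice, an uploader answers it, a claimant decides whether to sue.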

The DMCA didn’t do much to temper content companies’ accusations that Internet platforms enable infringement. In 2007, Google was facing a lot of pressure over its recent acquisition of YouTube. YouTube complied with all of the requirements for safe harbor status, including adhering to the notice-and-takedown procedure, but Hollywood wanted more.

Google was eager to court those same companies as YouTube adopters, so it unveiled Content ID. Content ID lets rightsholders submit large databases of video and audio fingerprints. YouTube’s bot scans every new upload for potential matches to those fingerprints. The rightsholder can choose whether to block, monetize, or monitor matching videos. Since the system can automatically remove or monetize a video with no human interaction, it often removes videos that make lawful fair uses of audio and video.
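To make the mechanics concrete, here is a toy sketch of a fingerprint-matching filter of the kind described above. It is not how Content ID is actually implemented: real systems use perceptual fingerprints that tolerate re-encoding, while this example assumes an exact SHA-256 hash of fixed-size byte chunks as a stand-in. The names `Policy`, `ReferenceDatabase`, `register`, and `scan` are all hypothetical.

```python
import hashlib
from enum import Enum
from typing import Optional


class Policy(Enum):
    BLOCK = "block"
    MONETIZE = "monetize"
    MONITOR = "monitor"


def fingerprint(chunk: bytes) -> str:
    # Assumption: an exact hash stands in for a perceptual fingerprint.
    return hashlib.sha256(chunk).hexdigest()


def chunks(data: bytes, size: int = 4) -> list[bytes]:
    # Split media into fixed-size pieces so partial copies can match.
    return [data[i:i + size] for i in range(0, len(data), size)]


class ReferenceDatabase:
    """Hypothetical store of rightsholder-submitted fingerprints."""

    def __init__(self) -> None:
        self._index: dict[str, tuple[str, Policy]] = {}

    def register(self, owner: str, reference: bytes, policy: Policy) -> None:
        # The rightsholder picks the policy applied to every future match.
        for chunk in chunks(reference):
            self._index[fingerprint(chunk)] = (owner, policy)

    def scan(self, upload: bytes) -> Optional[tuple[str, Policy]]:
        # Return the first matching (owner, policy), or None if nothing matches.
        for chunk in chunks(upload):
            hit = self._index.get(fingerprint(chunk))
            if hit is not None:
                return hit
        return None


db = ReferenceDatabase()
db.register("Studio", b"ABCDEFGH", Policy.BLOCK)

review_with_short_clip = b"ABCDxxxx"   # quotes one chunk of the reference
original_work = b"zzzzyyyy"            # shares nothing with the reference

clip_result = db.scan(review_with_short_clip)
original_result = db.scan(original_work)
```

In this sketch the review that quotes a single chunk gets the same `BLOCK` result as a wholesale copy would. Nothing in the matching step asks why the material was used, which is where fair use drops out of the picture.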

Now, some lobbyists think that content filtering should become a legal obligation: content companies are proposing that once a takedown notice goes uncontested, the platform should have to filter and block any future uploads of the same allegedly infringing content. In essence, content companies want the law to require platforms to develop their own Content-ID-like systems in order to enjoy safe harbor status.

For the record, the notice-and-takedown procedure has its problems. It results in alleged copyright infringement being treated differently from any other type of allegedly unlawful speech: rather than wait for a judge to determine whether a piece of content is in violation of copyright, the system gives the copyright holder the benefit of the doubt. You don’t need to look far to find examples of copyright holders abusing the system, silencing speech with dubious copyright claims.

That said, safe harbors are essential to the way the Internet works. If the law didn’t provide a way for web platforms to achieve safe harbor status, services like YouTube, Facebook, and Wikipedia could never have been created in the first place: the potential liability for copyright infringement would be too high. Section 512 provides a route to safe harbor status that most companies that use user-generated content can reasonably comply with.

A filter-everything approach would change that. The safe harbor provisions let Internet companies focus their efforts on creating great services rather than spend their time snooping their users’ uploads. Filter-everything would effectively shift the burden of policing copyright infringement to the platforms themselves, undermining the purpose of the safe harbor in the first place.

That approach would dramatically shrink the playing field for new companies in the user-generated content space. Remember that the criticisms of YouTube as a haven for infringement existed well before Google acquired it. The financial motivators for developing a copyright bot were certainly in place pre-Google too. Still, it took the programming power of the world’s largest technology company to create Content ID. What about the next YouTube, the next Facebook, or the next SoundCloud? Under filter-everything, there might not be a next.

Here’s something else to consider about copyright bots: they’re not very good. Content ID routinely flags videos as infringing even when they don’t copy from another work at all. Bots also don’t understand the complexities of fair use. In September, a federal appeals court confirmed that copyright holders must consider fair use before sending a takedown notice. Under the filter-everything approach, legitimate uses of works wouldn’t get the reasonable consideration they deserve. Even if content-recognizing technology were airtight, computers would still not be able to consider a work’s fair use status.

Again and again, certain powerful content owners seek to brush aside the importance of fair use, characterizing it as a loophole in copyright law or an old-fashioned relic. But without fair use, copyright isn’t compatible with the First Amendment. Do you trust a computer to make the final determination on your right to free speech?

We're taking part in Copyright Week, a series of actions and discussions supporting key principles that should guide copyright policy. Every day this week, various groups are taking on different elements of the law, and addressing what's at stake, and what we need to do to make sure that copyright promotes creativity and innovation.
