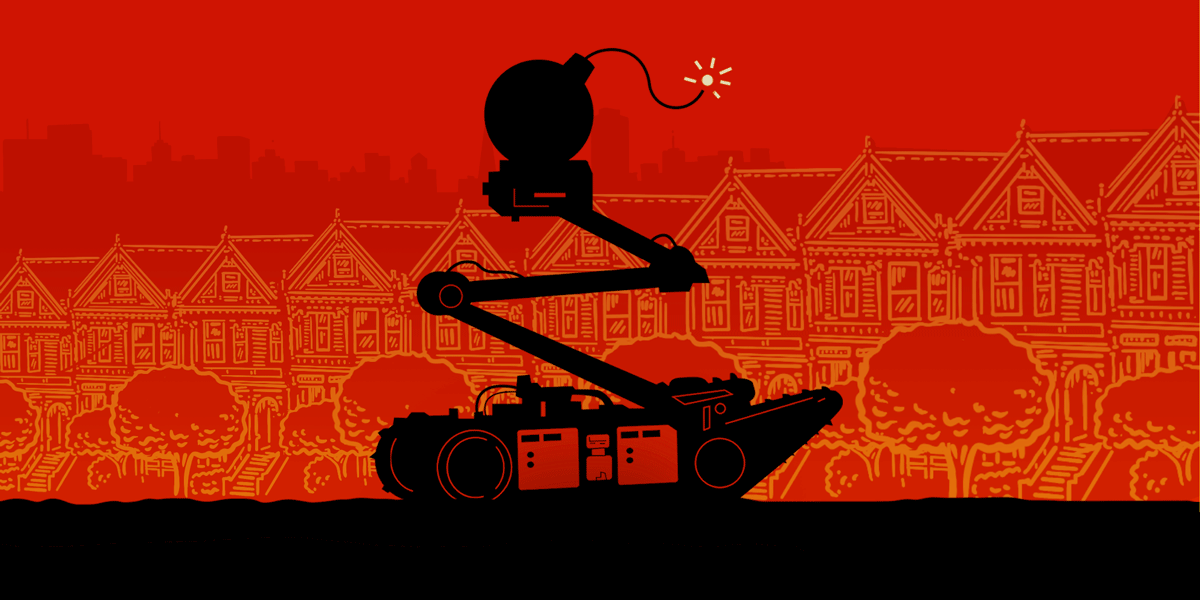

The San Francisco Board of Supervisors on Nov. 29 voted 8 to 3 to approve on first reading a policy that would formally authorize the San Francisco Police Department to deploy deadly force via remote-controlled robots. The majority fell down the rabbit hole of security theater: doing anything to appear to be fighting crime, regardless of whether or not it has any tangible effect on public safety.

These San Francisco supervisors seem not only willing to approve dangerously broad language about when police may deploy robots equipped with explosives as deadly force, but they are also willing to smear those who dare to question its possible misuses as sensationalist, anti-cop, and dishonest.

EMAIL YOUR SUPERVISOR: DON'T LET SFPD ARM ROBOTS

When can police send in a deadly robot? According to the policy: “The robots listed in this section shall not be utilized outside of training and simulations, criminal apprehensions, critical incidents, exigent circumstances, executing a warrant or during suspicious device assessments.” That covers a lot of situations: every arrest, every search executed with a warrant, and possibly some protests.

When can police use the robot to kill? After an amendment proposed by Supervisor Aaron Peskin, the policy now reads: “Robots will only be used as a deadly force option when [1] risk of loss of life to members of the public or officers is imminent and [2] officers cannot subdue the threat after using alternative force options or de-escalation tactics options, **or** conclude that they will not be able to subdue the threat after evaluating alternative force options or de-escalation tactics. Only the Chief of Police, Assistant Chief, or Deputy Chief of Special Operations may authorize the use of robot deadly force options.”

The “or” in this policy (emphasis added) does a lot of work. Police can use deadly force after “evaluating alternative force options or de-escalation tactics,” meaning that they don’t have to actually try them before remotely killing someone with a robot strapped with a bomb. Supervisor Hillary Ronen proposed an amendment that would have required police to actually try these non-deadly options, but the Board rejected it.

Supervisors Ronen, Shamann Walton, and Dean Preston did a great job pushing back against this dangerous proposal. Police claimed this technology would have been useful during the 2017 Las Vegas mass shooting, in which the shooter was holed up in a hotel room. Supervisor Preston responded that it probably would not have been a good idea to detonate a bomb inside a hotel.

The police department representative also said the robot might be useful in the event of a suicide bomber. But exploding the robot’s bomb could detonate the suicide bomber’s device, thus fulfilling the terrorist’s aims. After commonsense questioning from their peers, pro-robot supervisors dismissed these concerns as being motivated by ill-formed ideas of “robocops.”

The Board majority failed to address the many ways that police have used and misused technology, military equipment, and deadly force over recent decades. They seem to trust that police would roll out this type of technology only in the absolutely most dire circumstances, but that’s not what the policy says. They ignore the innocent bystanders and unarmed people already killed by police using other forms of deadly force that were also intended only for dire circumstances. They didn’t account for the militarization of police responses to protesters, such as the Predator drone that conducted overhead surveillance of a demonstration in Minneapolis.

The fact is, police technology constantly experiences mission creep, meaning that equipment reserved for specific or extreme circumstances ends up being used in increasingly everyday or casual ways. This is why President Barack Obama in 2015 rolled back the Department of Defense’s 1033 program, which had handed out military equipment to local police departments. He said at the time that police must “embrace a guardian—rather than a warrior—mind-set to build trust and legitimacy both within agencies and with the public.”

Supervisor Rafael Mandelman smeared opponents of the bomb-carrying robots as “anti-cop,” and unfairly questioned the professionalism of our friends at other civil rights groups. Nonsense. We are just asking why police need new technologies and under what circumstances they actually would be useful. This echoes the recent debate in which the Board of Supervisors enabled police to get live access to private security cameras, even though no one offered a realistic scenario in which that access would prevent crime. This is disappointing from a Board that in 2019 made San Francisco the first municipality in the United States to ban police use of face recognition.

We thank the strong coalition of concerned residents, civil rights and civil liberties activists, and others who pushed back against this policy. We also thank Supervisors Walton, Preston, and Ronen for their reasoned arguments and commonsense defense of the city’s most vulnerable residents, who too often are harmed by police violence.

Fortunately, this fight isn’t over. The Board of Supervisors needs to vote again on this policy before it becomes effective. If you live in San Francisco, please tell your Supervisor to vote “no.” You can find an email contact for your Supervisor here, and determine which Supervisor to contact here. Here's text you can use (or edit):

Do not give SFPD permission to kill people with robots. There are many alternatives available to police, even in extreme circumstances. Police equipment has a documented history of misuse and mission creep. While the proposed policy would authorize police to use armed robots as deadly force only when the risk of death is imminent, this legal standard has often been under-enforced by courts and criticized by activists. For the sake of your constituents' rights and safety, please vote no.