Readers of these pages will be familiar with the debate going on between government officials and technologists around the world about law enforcement’s perceived need to access the content of any and all encrypted communications.1

In this in-depth post, we’ll discuss why—in order to be effective—any legal mandate requiring cryptographic communications systems to be designed to retain the ability to provide law enforcement “exceptional access” to encrypted content would violate the First Amendment.

Last month, the Washington Post’s editorial board doubled down on the side of exceptional access, suggesting that Silicon Valley’s “paragons of innovation” ought to more clearly “acknowledge the legitimate needs” of law enforcement—and presumably give the FBI exactly what it’s asking for. That suggestion came even after the editorial board acknowledged that both industry and academic experts uniformly tell us that giving the government exceptional access to our data would be a dangerous idea from a cryptographic perspective, putting our security at significant risk.

And just this week, in the New York Times, law enforcement officials from New York, London, Paris, and Madrid published a similarly flawed op-ed discussing device encryption, using misleading anecdotes in an attempt to frighten the public into accepting their vision of total surveillance through undermined crypto.

But a mandate that developers weaken encryption systems to suit the whim of law enforcement isn’t just a technically bad idea; any such mandate would necessarily be either ineffective or unconstitutional.

What would an “exceptional access” mandate actually mean?

Although no legislation has yet been proposed, government officials such as FBI director James Comey have repeated their position enough over the last several months to make it clear, if not logically consistent: the FBI says it supports “strong encryption,” but it wants the ability to read any and all encrypted messages if it has the proper legal authority.

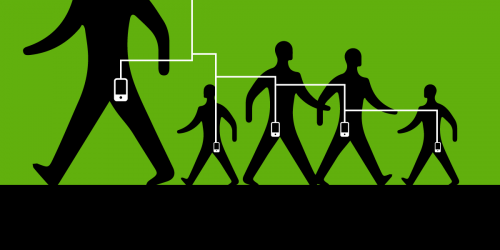

If the government really is serious about creating a legislative requirement that law enforcement always be able to access the content of a communication, simply requiring companies like Apple to redesign their systems won’t be enough. Why? Because every terrorist, pedophile, mafioso, and run-of-the-mill crook will be able to simply stop using iMessage or WhatsApp and turn instead to one of the many apps that implement end-to-end cryptography without the FBI’s hypothetical golden key. Or they could simply use strong encryption protocols like OTR2 on top of other messaging services.

Back in the 1990s when Congress passed CALEA, the overwhelming majority of our communications went through centralized service providers—mostly phone companies. CALEA’s mandate that phone companies make it possible for law enforcement to wiretap their customers was in large part effective, because there wasn’t much else people could use to communicate.

But the app economy has changed all that. Today, centralized service providers aren’t the only option for communications applications; instead you often have a range of options for communicating on a given service or platform. Take for example ChatSecure, a mobile app that implements OTR. ChatSecure, like nearly every OTR implementation, doesn’t depend on any specific service provider and indeed is designed to add end-to-end encryption to other providers’ unencrypted chat services. A mandate that the provider of the chat service, e.g., Google Chat, be able to provide plaintext on demand would be rendered meaningless for anyone using ChatSecure. There is no way that such providers can do so, because they don’t have access to the keys.
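To make the layering concrete, here is a minimal sketch of end-to-end encryption riding on top of a provider that only relays bytes. Everything in it is an assumption made for illustration: `ChatProvider`, `seal`, and `open_` are hypothetical names, the shared key is assumed to have been agreed between the two users out of band, and the cipher is a toy built from SHA-256 and HMAC rather than OTR or any vetted construction. It should not be used to protect real messages.

```python
import hashlib
import hmac
import secrets


def _subkey(key: bytes, label: bytes) -> bytes:
    # Derive separate encryption and authentication keys from one shared secret.
    return hmac.new(key, label, hashlib.sha256).digest()


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    # Toy keystream: SHA-256 in counter mode. Illustrative only, not a vetted cipher.
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return out[:length]


def seal(key: bytes, plaintext: bytes) -> bytes:
    """Encrypt and authenticate on the sender's device, before the provider sees anything."""
    nonce = secrets.token_bytes(16)
    stream = _keystream(_subkey(key, b"enc"), nonce, len(plaintext))
    body = bytes(a ^ b for a, b in zip(plaintext, stream))
    tag = hmac.new(_subkey(key, b"mac"), nonce + body, hashlib.sha256).digest()
    return nonce + body + tag


def open_(key: bytes, blob: bytes) -> bytes:
    """Verify and decrypt on the recipient's device."""
    nonce, body, tag = blob[:16], blob[16:-32], blob[-32:]
    expected = hmac.new(_subkey(key, b"mac"), nonce + body, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):
        raise ValueError("message was altered or the key is wrong")
    stream = _keystream(_subkey(key, b"enc"), nonce, len(body))
    return bytes(a ^ b for a, b in zip(body, stream))


class ChatProvider:
    """Hypothetical chat service: it stores and forwards whatever bytes it is handed."""

    def __init__(self) -> None:
        self.log = []  # everything the provider could turn over on demand

    def relay(self, blob: bytes) -> bytes:
        self.log.append(blob)
        return blob


# The key lives only on the two users' devices; the provider never receives it.
shared_key = secrets.token_bytes(32)
provider = ChatProvider()
delivered = provider.relay(seal(shared_key, b"meet at noon on Tuesday"))
assert open_(shared_key, delivered) == b"meet at noon on Tuesday"
```

In this sketch the only thing in `provider.log` is the sealed blob. A demand for plaintext served on the provider can return nothing more than that.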

As Stanford computer scientist, lawyer, and former EFF intern Jonathan Mayer put it:

In order to believe that [exceptional access] will work, we have to believe there is a set of criminals . . . not smart enough to do any of the following:

· Install an alternative storage or messaging app.

· Download an app from a website instead of an official app store.

· Use a web-based app instead of a native mobile app.

It’s difficult to believe that many criminals would fit the profile.

Meanwhile, members of the technical community have been clear that government calls for exceptional access are exceptionally dangerous from a cryptographic perspective. Any system that allows the government access to encrypted communications would entail the need for third parties to hold cryptographic materials or the plaintext of messages. As a recent paper by an all-star cast of computer scientists and security researchers explained, this is highly risky because it increases system complexity and provides juicy targets for attackers.

The technological problems with safely implementing escrowed or split-key crypto should be enough to end this so-called debate now. However, from a legal perspective, an exceptional access mandate is more objectionable for what it would do to the cutting edge of cryptographic development: stop it dead.

What does the First Amendment have to say about a crypto mandate?

In spite of all the fervent op-eds, the government seems reluctant to actually put forward a proposal for an exceptional access mandate. That may well be because this law would act as what’s known as a “prior restraint.” Prior restraints are almost never permissible under the First Amendment, so a crypto mandate would be highly vulnerable to constitutional challenge. EFF has worked to establish and strengthen First Amendment protections for encryption, and we’d welcome the opportunity to take that case. In the rest of this section, we’ll explain why we think we’d win.

What is a prior restraint?

A prior restraint is a government action that prevents people from speaking or publishing before they have a chance to do so. (That’s in contrast to a punishment imposed after someone speaks. Think of a lawsuit for defamation that results in a defendant paying a monetary judgment for something she said about the plaintiff.) Prior restraints have an important place in the history of the First Amendment. In the seventeenth century, operators of printing presses in England were required to obtain licenses from the government in order to publish. As the U.S. Supreme Court explained in 1931 in Near v. Minnesota, the drafters of the Bill of Rights, including notably James Madison, were deeply worried that the new American government might pass similar laws, which the Court called “the essence of censorship.” This is one of the main concerns that led to the First Amendment’s guarantee of freedom of the press, which in the modern era extends beyond the operators of printing presses to all speakers.

Because prior restraints are central to the motivating purpose of the First Amendment, the Supreme Court has been extremely hostile to laws that restrict speech in advance. In fact, no prior restraint considered by the Supreme Court has ever been upheld. Most famously, the Court struck down a lower court’s injunction against the publication of the so-called Pentagon Papers by the New York Times and the Washington Post in 1971 despite the government’s claim that the publication would cause grave harm to national security. Coming out of these cases, prior restraints are said to bear a “heavy presumption” against their constitutionality. Courts often employ a hard-to-meet checklist, under which prior restraints must be (1) necessary to prevent a harm to a governmental interest of the highest order; absent which (2) irreparable harm will definitely occur; (3) no alternative exists; and (4) the prior restraint will actually prevent the harm.

Why is a crypto mandate a prior restraint?

To recap, laws that prevent authors from publishing are almost always unconstitutional. So if we can show that a crypto mandate acts to prevent publication or speech, it’s probably toast. What’s left is the connection between encryption software and free speech. Fortunately, the legal principle that code is speech is near and dear to EFF’s heart. In the 1990s, we successfully argued Bernstein v. DOJ to the Ninth Circuit Court of Appeals on behalf of cryptographer Daniel J. Bernstein, establishing that laws prohibiting the export of cryptography software without a license were prior restraints, and that software code is expression protected by the First Amendment.3

Because there’s no proposal for a crypto mandate yet on the table, we have to guess at what it might look like. It might be something like CALEA, requiring service providers to architect their systems in such a way as to make exceptional access possible. Some would argue that this might not be a prior restraint, since it only affects what service providers can offer. But this is where the issue of effectiveness becomes paramount. As we described above, it’s naive to think that a mandate aimed only at service providers would prevent criminals from using strong encryption; they’d simply use apps that offer it on top of insecure messaging services, for example.

That’s why in order to be effective, a mandate would have to also sweep in the developers of apps that offer end-to-end encryption, though the government has been reluctant to say that outright. But requiring developers to maintain the capability to provide law enforcement access to all encrypted communications would halt the state of the art of development in end-to-end encryption. For instance, because Moxie Marlinspike and Trevor Perrin’s advanced Axolotl cryptographic ratchet implements forward secrecy and future secrecy, no system implementing that protocol as intended could be permitted.
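A rough sketch may help show why forward secrecy and retained access pull in opposite directions. The code below is a simplified symmetric hash ratchet, not the Axolotl protocol itself: Axolotl also mixes fresh Diffie-Hellman exchanges into the chain, which is where future secrecy comes from, and this sketch omits that entirely. The names `ratchet_step` and `Session`, the one-byte labels, and the placeholder starting secret are all assumptions made for illustration.

```python
import hashlib
import hmac
from typing import Tuple


def ratchet_step(chain_key: bytes) -> Tuple[bytes, bytes]:
    """Derive (message_key, next_chain_key) from the current chain key.

    HMAC-SHA256 is one-way, so holding next_chain_key does not let anyone
    work backwards to chain_key or to the message key derived from it.
    """
    message_key = hmac.new(chain_key, b"\x01", hashlib.sha256).digest()
    next_chain_key = hmac.new(chain_key, b"\x02", hashlib.sha256).digest()
    return message_key, next_chain_key


class Session:
    """One side of a conversation; it keeps only the current chain key."""

    def __init__(self, initial_chain_key: bytes) -> None:
        self._chain_key = initial_chain_key

    def next_message_key(self) -> bytes:
        # Advance the chain and overwrite the old chain key. The caller is
        # expected to discard the message key once the message is handled.
        message_key, self._chain_key = ratchet_step(self._chain_key)
        return message_key


# Both parties start from the same secret (a placeholder value here) and
# step in lockstep, so they derive identical per-message keys.
alice = Session(b"\x00" * 32)
bob = Session(b"\x00" * 32)
assert alice.next_message_key() == bob.next_message_key()
assert alice.next_message_key() == bob.next_message_key()
```

After each step, neither device holds anything that can regenerate an earlier message key. A requirement to decrypt past traffic on demand would mean keeping exactly the material this design exists to throw away.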

Put another way, the government would be telling developers they cannot produce software (and publish open source code) that implements features incompatible with exceptional access. To see why that’s a clear prior restraint, imagine the government restricted use of certain emoji. The cactus is cool, but the smiling pile of poop is verboten. Like emoji, code is a form of speech, and publishing code that has certain features would be outlawed.

In light of this simple equation, a law requiring exceptional access would be on very thin ice. The government would have to show that not having the mandate will “result in direct, immediate, and irreparable damage” to national security or safety, in the words of Justice Stewart in the Pentagon Papers case. Many apps offer such features today, so it’s hard to imagine a court seeing this necessity. What’s more, prior restraints must be effective—they must actually work—in order to be constitutional. But given the nature of open source development, even a crypto mandate that applied to apps offering end-to-end encryption would fail to take down every fork of every project, particularly those developed outside the United States.4

Any mandate that would require developers to permit law enforcement “exceptional access” would either be an unconstitutional prior restraint or entirely ineffective.

FBI Director Comey has been crystal clear in one respect: he wants a valid search warrant to result in the return of plaintext, every time, no matter what. He doesn’t particularly care how developers get there; all he knows is he wants the goods. But unlike the last time around when CALEA was passed, secure communications no longer depend on tools developed by service providers. If Apple is forced to backdoor iMessage, everyone interested in privacy and security—including the criminals who most worry Comey—will simply switch to something like OTR, and he will be out of luck. And because banning OTR (or forcing the developers to implement any kind of exceptional access) would amount to a prior restraint, we urge Congress to reject law enforcement’s call for sweeping legislation. But if Congress fails to listen to reason and passes an exceptional access mandate, you can expect to see us challenge it in court.

- 1. This debate often combines and indeed confuses encryption of devices and storage with encryption of communications, which may raise different issues. This post focuses on issues specific to encrypted communications.

- 2. OTR, short for “off-the-record,” is a protocol that allows people to have truly end-to-end encrypted communications over otherwise unencrypted channels like Google Chat or Facebook Messenger.

- 3. Although the Ninth Circuit later withdrew its opinion in Bernstein, the lower court’s opinion remains good law, and the Sixth Circuit reached similar conclusions in 2000 in Junger v. Daley.

- 4. A similar analysis would also apply to an argument that a crypto mandate is a so-called content-based restriction on speech that fails strict scrutiny.