It’s been a joke for years now, from the days when Facebook was just a website where you said you were eating a sandwich and Instagram was just where you posted photos of said sandwich, but, right now, we really are living our everyday lives online. Teachers are trying to teach classes online, librarians are trying to host digital readings, and trainers are trying to offer home classes.

With more people entering the online world, more people are encountering the barriers created by copyright. Now is no time to make those barriers higher, but a new petition directed at tech companies does exactly that, and in the process tries to do for the US what Article 17 of last year's European Copyright Directive is doing for Europe: create a rule requiring online service providers to send everything we post to the Internet to black-box machine learning filters that will block anything that the filters classify as "copyright infringement."

The petition, from musical artists, calls on companies to “reduce copyright infringement by establishing ‘standard technical measures.’” The argument is that, because of COVID-19, music labels and musicians cannot tour and, therefore, are having a harder time making up for losses due to online copyright infringement. So the platforms must do more to prevent that infringement.

Musical artists are certainly facing grave financial harms due to COVID-19, so it’s understandable that they’d like to increase their revenue wherever they can. But there are at least three problems with this approach, and each creates a situation which would cause harm for Internet users and wouldn’t achieve the ends musicians are seeking.

First, the Big Tech companies targeted by the petition already employ a wide variety of technical measures in the name of blocking infringement, and long experience with these systems has proven them to be utterly toxic to lawful online speech. YouTube even warned that this current crisis would prompt even more mistakes, since human review and appeals were going to be reduced or delayed. It has, at least, decided not to issue strikes except where it has "high confidence" that there was some violation of YouTube policy. In a situation where more people than before are relying on these platforms to share their legally protected expression, we should, if anything, be looking to lessen the burden on users, not increase it. We should be looking to make these systems fairer and more transparent, and to make appeals more accessible, not adding more technological barriers.

YouTube’s Content ID tool has flagged everything from someone speaking into a mic to check the audio to a synthesizer test. Scribd’s filter caught and removed a duplicate upload of the Mueller report, despite the fact that anything created by a federal government employee as part of their work can’t even be copyrighted. Facebook’s Rights Manager keeps flagging its users' performances of classical music composed hundreds of years ago. Filters can't distinguish lawful from unlawful content. Human beings need to review these matches.

But they don’t. Or if they do, they aren’t trained to distinguish lawful uses. Five rightsholders were happy to monetize ten hours of static because Content ID matched it. Sony refused the dispute by one Bach performer, who only got his video unblocked after leveraging public outrage. A video explaining how musicologists determine whether one song infringes on another was taken down by Content ID, and the system was so confusing that law professors who are experts in intellectual property couldn’t figure out the effect of the claim in their account if they disputed it. They only got the video restored because they were able to get in touch with YouTube via their connections. Private connections, public outrage, and press coverage often get these bad matches undone, but they are not a substitute for a fair system.

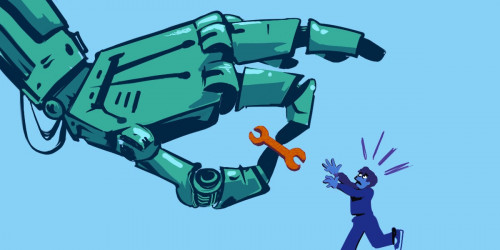

Second, adding more restrictions will make it harder to make and share our common culture at a time when, if anything, it needs to be easier. We should not require everyone online to become an expert in law and in the specific labyrinthine policies of a company or industry just when whole new groups of people are transferring their lives, livelihoods, and communities to the Internet.

If there's one lesson recent history has taught us, it's that "temporary, emergency measures" have a way of sticking around after the crisis passes, becoming a new normal. For the same reason that we should be worried about contact tracing apps becoming a permanent means for governments to track and control whole populations, we should be alarmed at the thought that all our online lives (which, during the quarantine, are almost our whole lives) will be subjected to automated surveillance, judgment and censorship by a system of unaccountable algorithms operated by massive corporations where it's impossible to get anyone to answer an email.

Third, this petition appears to be a dangerous step toward the content industry’s Holy Grail: manufacturing an industry consensus on standard technical measures (STMs) to police copyright infringement. According to Section 512 of the Digital Millennium Copyright Act (DMCA), service providers must accommodate STMs in order to receive the safe harbor protections from the risk of crippling copyright liability. To qualify as an STM, a measure must (1) have been developed pursuant to a broad consensus in an “open, fair, voluntary, multi-industry standards process”; (2) be available on reasonable and nondiscriminatory terms; and (3) not impose substantial costs on service providers. Nothing has ever met all three requirements, not least because no “open, fair, voluntary, multi-industry standards process” exists.

Many in the content industries would like to change that, and some would like to see the U.S. follow the EU in adopting mandatory copyright filtering. The EU’s Copyright Directive, often referred to by its most controversial part, Article 17, passed a year ago, but only one country has made progress towards implementing it. Even before the current crisis, countries were having trouble reconciling the rights of users, the rights of copyright holders, and the obligations of platforms into workable law. The United Kingdom took Brexit as a chance not to implement it. And requiring automated filters in the EU runs into the problem that the EU has recognized the danger of algorithms by giving users the right not to be subject to decisions made by automated tools.

Put simply, the regime envisioned by Article 17 would end up being too complicated and expensive for most platforms to build and operate. YouTube's Content ID alone has cost $100,000,000 to date, and it just filters videos for one service. Musicians are 100 percent right to complain about the size and influence of YouTube and Facebook, but mandatory filtering creates a world in which only YouTube and Facebook can afford to operate. Cementing Big Tech’s dominance is not in the interests of musicians or users. Mandatory copyright filters aren't a way to control Big Tech: they're a way for Big Tech to buy Perpetual Internet Domination licenses that guarantee that they need never fear a competitor.

Musicians are under real financial stress due to COVID-19, and they are not incorrect to see something wrong with just how much of the world is in the hands of Big Tech. But things will not get better for them or for users by entrenching its position or making it harder to share work online.