Google’s handling of a recent decision by the European Court of Justice (ECJ) that allows Europeans to request that public information about them be deleted from search engine listings is causing frustration among privacy advocates. Google, which openly opposed interpreting Europe’s data protection laws as including the removal of publicly available information, is being accused by some of intentionally spinning the ECJ’s ruling to appear ‘unworkable’, while others, such as journalist Robert Peston, have expressed dissatisfaction with the ECJ ruling itself.

The issue with the ECJ judgement isn't European privacy law, or the response by Google. The real problem is the impossibility of an accountable, transparent, and effective censorship regime in the digital age, and the inevitable collateral damage born of any attempt to create one, even with the best intentions. The ECJ could have formulated a decision that would have placed Google under the jurisdiction of the EU’s data protection law, and protected the free speech rights of publishers. Instead, the court has created a vague and unappealable model, where Internet intermediaries must censor their own references to publicly available information in the name of privacy, with little guidance or obligation to balance the needs of free expression. That model won’t keep that information private, and will make matters worse in the global battle against state censorship.

Google may indeed be seeking to play the victim in how it portrays itself to the media in this battle, but Google can look after itself. The real victims in this battle lie further afield, and should not be ignored.

The first victim of Google’s implementation of the ECJ decision is transparency under censorship. Back in 2002—in the wake of bad publicity following the company’s removal of content critical of the Church of Scientology—Google established a policy of informing users when content was missing from search engine results. This, at least, gave some visibility when data was hidden away from them. Since then, whenever content has been removed from a Google search, the company has posted a message at the bottom of each search page notifying its users, and if possible they’ve passed the original legal order to Chilling Effects. Even during its ill-considered collaboration with Chinese censors, Google maintained this policy of disclosure to users; indeed, one of the justifications the company gave for working in China is that Chinese netizens would know when their searches were censored.

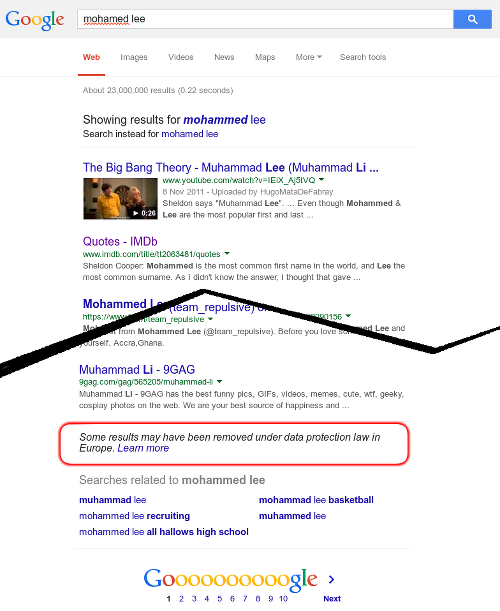

Right to be Non-Existent: Google warns of potential removals, even when the person you've searched for doesn't exist.

Google's implementation of the ECJ decision has profoundly diluted that transparency. While the company will continue to place warnings at the bottom of searches, those warnings have no connection to deleted content. Rather, Google is now placing a generic warning at the bottom of any European search that it believes is a search for an individual name, whether or not content related to that name has been removed.

Google’s user notification warnings have now been rendered useless for providing any clear indication of censored content. (As an aside, this means that Google is also now posting warnings based on what its algorithms think "real names" look like—even though these determinations are notoriously inaccurate, as we pointed out during Google Plus's Real Names fiasco.)

The second victim of Google’s ECJ implementation is fairness. After Google informed major news media like the Guardian UK and BBC that they were being censored, those sites noted—correctly—that legitimate journalism was being silenced. Google subsequently restored some of the news stories it had been told to remove. Will Google review its decisions when smaller media, such as bloggers, complain? Or does the power to undo censorship remain only with the traditional press and their bully pulpit? Even the flawed DMCA takedown procedure includes a legally defined path for appealing the removal of content. For now it seems that restorations will rely not on a legal right for publishers to appeal, but rather on the off chance that intermediaries like Google will assume the risk of legal penalties from data protection authorities, and restore deleted links.

Which brings us to the third victim: Europe's privacy law itself. Europe's privacy regime has long been a model for effective and reasonable governance of privacy. Its recently updated data protection regulation provides an opportunity for the European Union to define how the right to privacy can be defended by governments in the modern digital era.

Tying the data protection regulation to censorship risks discrediting its aims and impugning its practicality.

“Minor” censorship is still censorship

Before Google Spain vs. Gonzalez was decided by the ECJ, the court’s advisor, Advocate General Jääskinen, spelled out a reasonable model for deciding the case, one that would have made Google and other non-European companies subject to EU privacy law but would not have required the deletion or hiding of public knowledge. Instead, the court gave credence to the idea that public censorship had a place in “fixing” privacy violations.

Some of the arguments in favor of the ECJ censorship model are reminiscent of other attempts to aggressively block and filter data, including the ongoing regulatory battles against online copyright infringement. While it can be argued that the latest removals are “hugely less significant than the restrictions that Google voluntarily imposes for copyright and other content,” they are no less insidious. Every step down the road of censorship is damaging. And when each step proves—as it did with copyright—to be ineffective in preventing the spread of information, the pressure grows to take the next, more repressive, step.

Currently the EU’s requirement on Google to censor certain entries can easily be bypassed by searching on Bing, say, or by using the US Google search, or by adding search terms beyond simply a name. That is not surprising. Online censorship can almost always be circumvented. Turkey’s ban on Twitter was equally useless, but extremely worrying nonetheless. Even Jordan’s draconian censorship of news sites that fail to register for licenses has been bypassed using Facebook...but should be condemned on principle regardless.

And a fundamentally unenforceable law is guaranteed to be the target of calls for increasingly draconian enforcement, as the legal system attempts to sharpen it into effectiveness. If Bing is touted as an alternative to Google, then the pressure will grow on Bing to perform the same service (Microsoft says it is already preparing a similar deletion service). Europe’s data protection administrators may grow unhappy that simply searching on google.com instead of google.fr or google.co.uk will reveal what was meant to be forgotten, and—as Canada's courts have already demanded—order search engines to delete data globally.

At the very least, European regulators need to stop thinking that handing over the reins of content regulation to the Googles and Facebooks of this world will lead anywhere good. The intricacies of privacy law need to be supervised by regulators, not paralegals in tech corporations. Restrictions on free expression need to be considered, in public, by the courts, on a case-by-case basis, and with both publishers and plaintiffs represented, not via an online form and the occasional outrage of a few major news sources. And online privacy needs better protection than censorship, which doesn't work, and causes so much more damage than it prevents.