Facebook Treats Punk Rockers Like Crazy Conspiracy Theorists, Kicks Them Offline

Facebook announced last year that it would ban followers of QAnon, the conspiracy theorists who allege that a cabal of satanic pedophiles is plotting against former U.S. president Donald Trump. It seemed like a case of good riddance to bad rubbish.

Members of an Oakland-based punk rock band called Adrenochrome were taken completely by surprise when Facebook disabled their band page, along with all three of their personal accounts, as well as a page for a booking business run by the band’s singer, Gina Marie, and drummer Brianne.

Marie had no reason to think that Facebook’s content moderation battle with QAnon would affect her. The strange word “adrenochrome” (which refers to oxidized adrenaline) was popularized by Hunter S. Thompson in two books from the 1970s. Marie and her bandmates, who didn’t even know about QAnon when they named their band years ago, picked the name as a shout-out to a song by a British band from the ’80s, Sisters of Mercy. They were as surprised as anyone that in the past few years, QAnon followers copied Thompson’s (fictional) idea that adrenochrome is an intoxicating substance, and gave this obscure chemical a central place in their ideology.

The four Adrenochrome band members had nothing to do with the QAnon conspiracy theory and didn’t discuss it online, other than receiving occasional (unsolicited and unwanted) Facebook messages from QAnon followers confused about their band name.

But on January 29, without warning, Facebook shut down not just the Adrenochrome band page, but the personal pages of the three band members who had Facebook accounts, including Marie, and the page for the booking business.

“I had 2,300 friends on Facebook, a lot of people I’d met on tour,” Marie said. “Some of these people I don’t know how to reach anymore. I had wedding photos, and baby photos, that I didn’t have copies of anywhere else.”

False Positives

The QAnon conspiracy theory became bafflingly widespread. Any website host (whether it’s the comments section of the tiniest blog or a big social media site) is within its rights to moderate QAnon-related content and the users who spread it. Can Facebook really be blamed for catching a few innocent users in the net that it aimed at QAnon?

Yes, actually, it can. We know that content moderation, at scale, is impossible to do perfectly. That’s why we advocate that companies follow the Santa Clara Principles: a short list of best practices that includes numbers (publish them), notice (provide it to users in a meaningful way), and appeal (a fair path to human review).

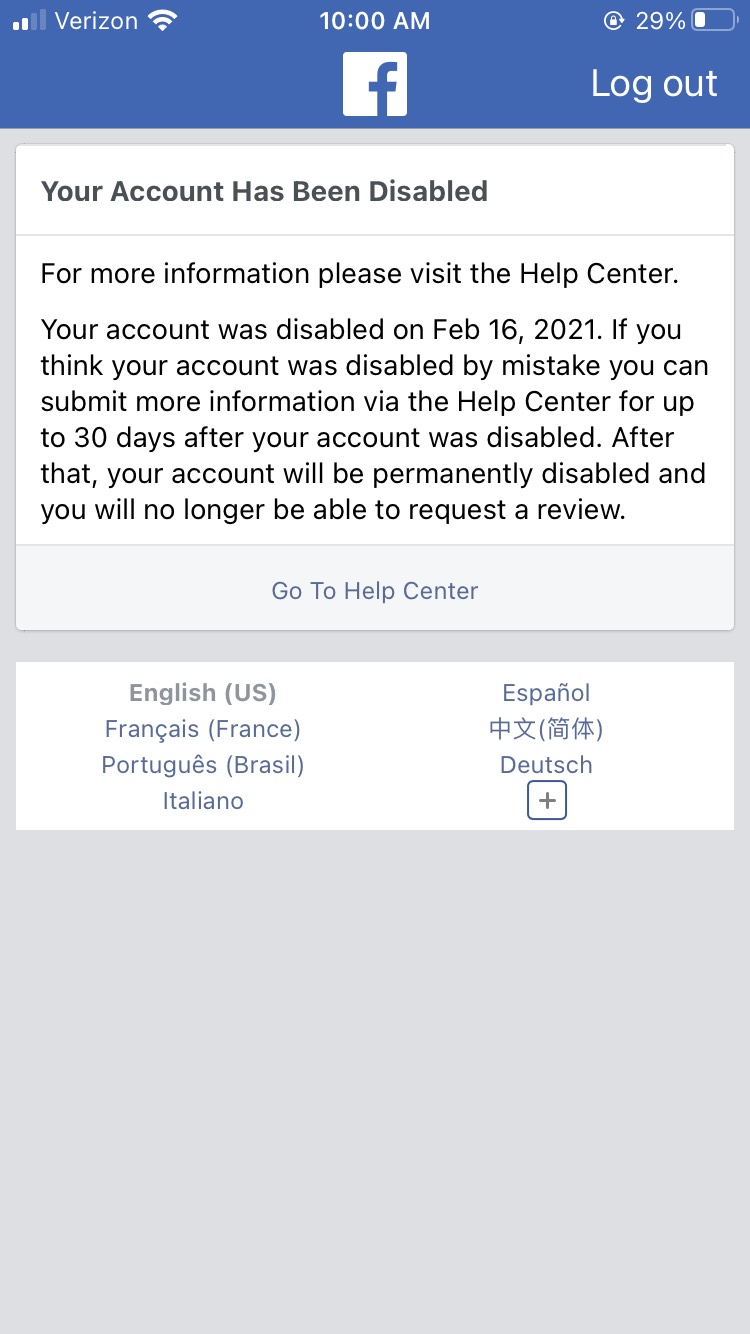

Facebook didn’t give Marie and her bandmates any reason why the pages went down, leaving them to assume it was related to their band’s name. It also didn’t provide any mechanism at all for appeal. All Marie got was a notice (see the screenshot) telling her that her account was disabled, and that it would be deleted permanently within 30 days. The notice said “if you think your account was disabled by mistake, you can submit more information via the Help Center.” But Marie wasn’t even able to log in to the Help Center to provide this information.

Ultimately, Marie reached out to EFF and Facebook restored her account on February 16, after we appealed to them directly. But then, within hours, Facebook disabled it again. On February 28, after we again asked Facebook to restore her account, it was restored.

We asked Facebook why the account went down, and they said only that “these users were impacted by a false positive for harmful conspiracy theories.” That was the first time Marie had been given any reason for losing access to her friends and photos.

That should have been the end of it, but on March 5 Marie’s account was disabled for a third time. She was sent the exact same message, with no option to appeal. Once more we intervened, and got her account back—we hope, this time, for good.

This isn’t a happy ending. First, users shouldn’t have to reach out to an advocacy group to get help challenging a basic account disabling. Inside Facebook, one hand wasn’t talking to the other, and the company couldn’t seem to stop this wrongful account termination.

Second, Facebook still hasn’t provided any meaningful ability to appeal—or even any real notice, something they explicitly promised to provide in our 2019 “Who Has Your Back?” report.

Facebook is the largest social network online. They have the resources to set the standard for content moderation, but they’re not even doing the basics. Following the Santa Clara Principles (Numbers, Notice, and Appeal) would be a good start.